What Decisions Should AI Make Without Us?

As AI systems influence hiring, lending, and healthcare, the question isn’t capability; it’s accountability.

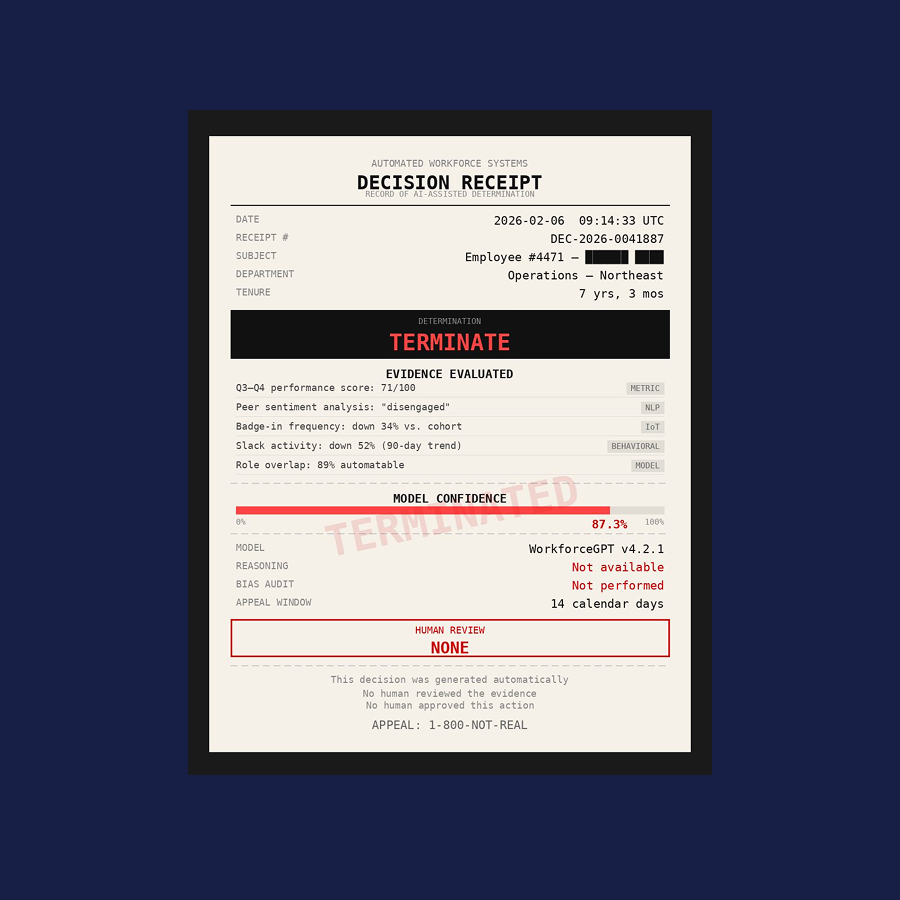

I posted that question alongside an image of a “decision receipt.” An employee with seven years of tenure. Performance metrics. Slack activity down. Badge frequency down. Model confidence: 87.3%.

Determination: TERMINATE.

Reasoning: Not available.

Human review: None.

The reaction was immediate. Some described it as dystopian. Others raised GDPR and regulatory concerns. Several simply responded to the original question: “None.”

What people were responding to wasn’t the existence of AI. It was the absence of ownership.

No one argued that machines can’t process signals faster than humans. No one denied that automation increases efficiency. The reaction came from something deeper: a consequential outcome produced without visible reasoning, context, or accountable authority.

We are in a period where AI is moving quickly from analytical assistance to operational influence. It is no longer confined to dashboards and recommendations; it is increasingly shaping hiring pipelines, credit assessments, healthcare prioritization, fraud adjudication, access controls, and capital allocation decisions.

The real question is not whether AI will assist. It already does. The question is where AI assistance ends and our authority begins.

AI Influence Extends Beyond the Final Decision

Much of the public debate focuses on extreme examples: termination, sentencing, medical triage. And of course, humans must be involved in decisions like these. But that framing is too narrow.

AI doesn’t have to directly terminate employment to materially affect someone’s life. It only needs to shape the environment in which those decisions are made. An incorrect revenue forecast can lead to budget reductions. Budget reductions lead to hiring freezes. Hiring freezes ultimately lead to layoffs. The model didn’t execute the final action, but it influenced the chain of events. That influence compounds.

As AI systems increasingly shape forecasts, performance indicators, and strategic assumptions, they begin to affect opportunity, mobility, and growth. The downstream impact may not be immediate, but it is real.

There are clearly domains where automation creates value. Routing service tickets, flagging anomalies, detecting fraud in milliseconds, and prioritizing high-volume workflows are bounded, rules-constrained problems where speed and consistency improve outcomes.

But once AI outputs begin influencing planning, evaluation, allocation, or opportunity, oversight cannot be optional.

Human-in-the-Loop AI Governance and Decision Accountability

Human-in-the-loop is often invoked as a safeguard, but rarely defined as infrastructure.

In practice, human-in-the-loop means that a human reviews AI outputs before any material action is taken, that the underlying reasoning and data pathways are visible, that recommendations can be challenged or overridden, and that responsibility for the final outcome is explicit and attributable.
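To make those four elements concrete, here is a minimal Python sketch of a review gate. It is an illustration under stated assumptions, not a description of any particular product: the names `Recommendation`, `Decision`, and `review`, and the `NO_ACTION` default, are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recommendation:
    """Model output. It is advisory only and is never acted on directly."""
    subject: str
    action: str
    confidence: float
    reasoning: Optional[str]  # None means the model exposed no rationale


@dataclass(frozen=True)
class Decision:
    """The final outcome, attributable to a named person."""
    subject: str
    action: str
    decided_by: str
    overrode_model: bool
    rationale: str


def review(rec: Recommendation, reviewer: str, approve: bool,
           rationale: str, alternative: str = "NO_ACTION") -> Decision:
    """Hypothetical gate: turn a recommendation into an owned decision."""
    # Visible reasoning: an unexplained recommendation cannot be reviewed.
    if not rec.reasoning:
        raise ValueError("recommendation has no visible reasoning")
    # Explicit ownership: a named reviewer must record why they decided.
    if not reviewer or not rationale:
        raise ValueError("a named reviewer and a rationale are required")
    # Override: the reviewer may replace the model's action with another.
    action = rec.action if approve else alternative
    return Decision(rec.subject, action, reviewer, not approve, rationale)
```

Under this sketch, the decision receipt from the opening (87.3% confidence, reasoning not available, no human review) would be rejected at the first check rather than executed.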

Without those elements, oversight becomes ceremonial. Authority quietly shifts from people to systems.

AI optimizes for measurable signals. Humans interpret context.

A model may detect a 52% drop in Slack activity. A manager may know that the individual is mentoring new hires or working on a strategic initiative that doesn’t generate digital exhaust. A model may signal productivity decline. Leadership may understand that a deliberate pivot is underway. A system may recommend cost reductions. Humans must weigh the long-term capability, morale, and execution risk embedded in that decision.

Institutional knowledge does not live solely in structured data. It lives in judgment. And judgment requires context.

Compliance, Traceability, and the Regulatory Reality

There is also a regulatory dimension that enterprises cannot ignore.

From the EU AI Act to financial services model risk guidance to employment and credit regulations, organizations are increasingly expected to demonstrate how AI-assisted decisions were formed. In lending, adverse action notices require explanation. In banking, model risk frameworks demand traceability and validation. In workforce analytics, automated decision-making can trigger disclosure and oversight obligations. AI influence without traceability is not merely a governance gap; it is a compliance exposure.

Every AI-assisted decision generates context: what triggered the inquiry, which data sources were accessed, how signals were weighted, what trade-offs were considered, and who approved the outcome. If that context is not captured and owned, it fragments across tools and vendors. Decisions become ephemeral. When assumptions change or outcomes are challenged, organizations struggle to reconstruct the reasoning.
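One way to picture that context is as a single record that travels with the decision. The Python sketch below is an assumption-laden illustration: the field names mirror the list above, while `DecisionRecord`, `fingerprint`, and `missing_context` are hypothetical names, and the SHA-256 fingerprint is just one simple way to detect later edits to a stored record.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical record of the context behind one AI-assisted decision."""
    trigger: str                      # what triggered the inquiry
    data_sources: List[str]           # which data sources were accessed
    signal_weights: Dict[str, float]  # how signals were weighted
    trade_offs: List[str]             # what trade-offs were considered
    approved_by: str                  # who approved the outcome
    outcome: str
    recorded_at: str                  # ISO 8601 timestamp supplied by the caller

    def to_json(self) -> str:
        # Sorted keys give a stable serialization for the same content.
        return json.dumps(asdict(self), sort_keys=True)

    def fingerprint(self) -> str:
        # Any later change to the stored record changes this digest.
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


def missing_context(record: DecisionRecord) -> List[str]:
    """Return the names of fields that were left empty."""
    return [name for name, value in asdict(record).items() if not value]
```

A record with an empty `approved_by` or `trade_offs` field would show up in `missing_context`, which is the kind of gap that otherwise surfaces only when a decision is challenged.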

Human-in-the-loop without preserved decision context does not scale. It erodes under operational pressure.

So what decisions should AI make without us? In high-impact domains, very few.

AI should inform, surface patterns, quantify trade-offs, and simulate scenarios. But final authority and the reasoning behind it must remain visible, defensible, and owned. Because the true test of enterprise AI is whether we can stand behind the decisions that follow, to employees, customers, shareholders, and regulators alike.

Building Human-in-the-Loop Into Enterprise AI Infrastructure

At Axonis, we believe human-in-the-loop cannot be a workflow checkbox; it must be part of the infrastructure. That means the system itself must preserve decision context, enforce policy at runtime, and make human attestation explicit and durable.

Axonis Decision Intelligence provides the foundation for this by operating directly in the execution path where data is accessed, models run, and actions are taken. Every AI-assisted decision can be captured as a governed record linking data inputs, policy controls, machine reasoning, and human approval. When human oversight is embedded at the architectural layer, not added after the fact, organizations can scale AI without surrendering authority, accountability, or institutional knowledge. That foundation is what makes human-in-the-loop sustainable and, ultimately, what makes enterprise AI defensible.

If you need to make human-in-the-loop part of your decision infrastructure, let’s talk.