AI Trust & Safety in Healthcare 2026

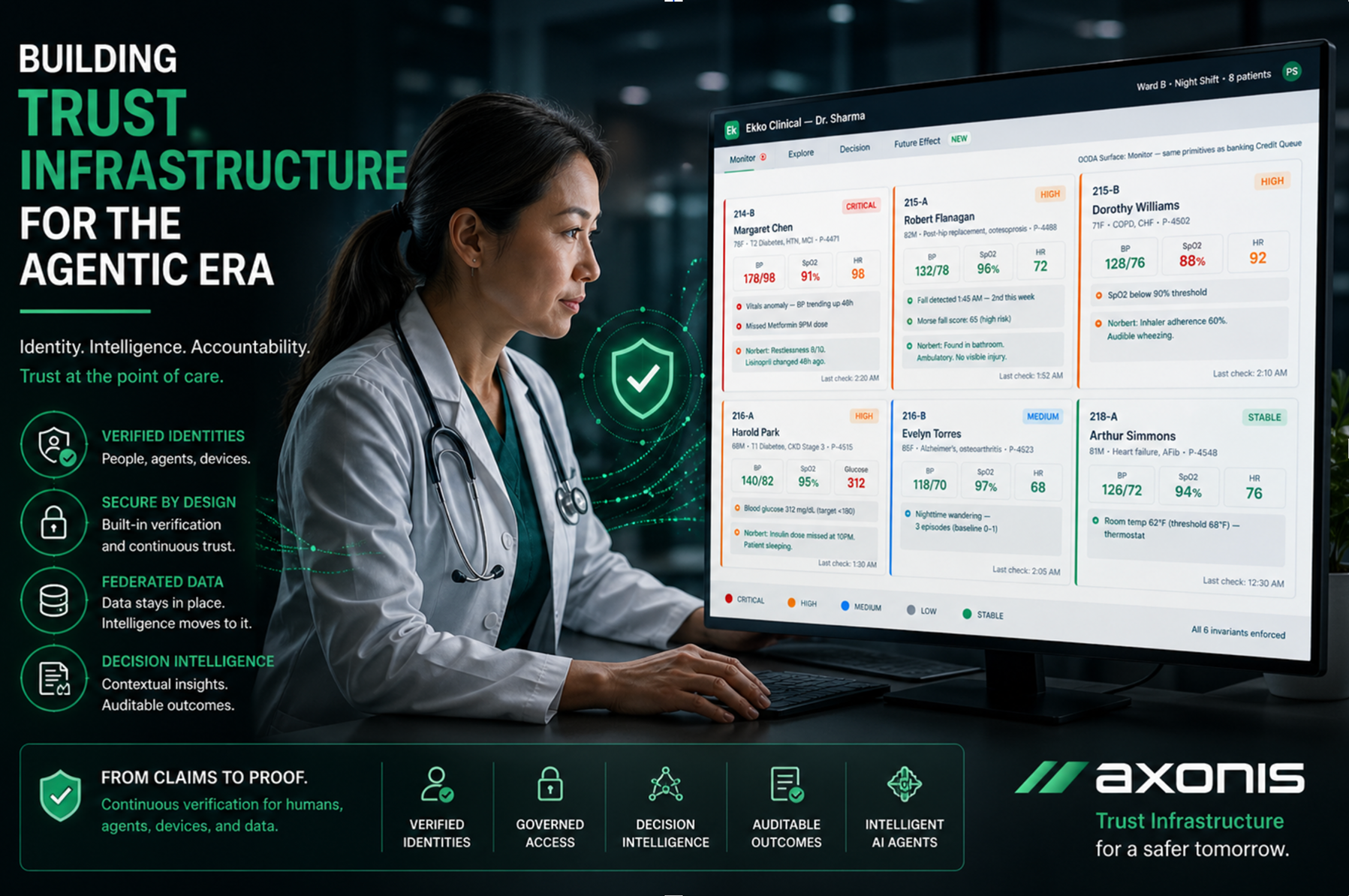

We brought together Vince Albanese (CEO, EKKO | Board Member, DirectTrust) and Axonis CCO Sheth Sanket to talk about what’s changing and what’s at risk as AI moves into real-world clinical and operational decisions.

Vince Albanese has spent more than four decades working at the intersection of technology and highly regulated industries. Today, as CEO of EKKO and Board Director at DirectTrust, he is helping shape the future of trust in healthcare. But his motivation is deeply personal.

Vince says that surviving a cancer diagnosis in 2013 changed how he sees the stakes of technology in healthcare. Together with his 45-year career in enterprise IT and 15 years working on patient interoperability, it has shaped his mission: helping build safer, more trusted systems for the next generation. In a recent conversation with Axonis Chief Customer Officer Sheth Sanket, Vince framed the current moment this way: AI isn’t just another technology wave like mobile or the internet. It’s a convergence of forces happening at unprecedented speed, creating both extraordinary opportunity and profound uncertainty.

And for Vince, the stakes are high. “At this stage of my life,” he shared, “my goal is to protect my children and grandchildren from the uncertainty of what’s coming next.” That perspective is what makes this conversation different. This isn’t about hype or speculation. It’s about what has to be true for AI to be trusted, especially in environments like healthcare, where decisions aren’t just operational but life-altering.

Because while AI is advancing rapidly, the foundations required to deploy it safely - identity, trust, governance, and accountability - are still being built.

From Claims to Proof

For years, digital systems have operated on claims. Usernames and passwords. Security questions. Even biometrics like voice and facial recognition. These mechanisms were designed for a world where impersonation was difficult and expensive. That world no longer exists. AI has made it trivial to replicate identity. Voice, face, behavior... everything can be simulated. As Vince puts it: “Claims are not sufficient anymore.”

This isn’t speculation. It’s already reflected in emerging standards and guidance, including NIST recommendations that call for cryptographic proof of identity. In other words, systems must move beyond “trust me” to verifiable, continuous validation of who or what is interacting. Even the conversation between Vince and Sheth, over video, could theoretically be an AI-generated impersonation. That is the new baseline.
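The difference between a claim and a proof can be sketched in a few lines. The example below is a minimal illustration, not a reference to any specific NIST mechanism: it uses a shared-secret challenge-response built on HMAC from the Python standard library, whereas production systems would more typically use asymmetric keys and hardware-backed credentials. The registry `ENROLLED_KEYS`, the participant ID `dr-lee`, and all function names are hypothetical.

```python
import hashlib
import hmac
import secrets

# Hypothetical registry: participant ID -> secret key enrolled out of band.
ENROLLED_KEYS = {"dr-lee": secrets.token_bytes(32)}


def issue_challenge() -> bytes:
    """Verifier side: a fresh random nonce, so old responses cannot be replayed."""
    return secrets.token_bytes(16)


def prove(key: bytes, challenge: bytes) -> bytes:
    """Prover side: demonstrate possession of the key without sending the key."""
    return hmac.new(key, challenge, hashlib.sha256).digest()


def verify(participant_id: str, challenge: bytes, response: bytes) -> bool:
    """Verifier side: recompute the expected response and compare in constant time."""
    key = ENROLLED_KEYS.get(participant_id)
    if key is None:
        return False
    expected = hmac.new(key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


# Usage: the genuine key holder passes; an impersonator with a different key fails.
challenge = issue_challenge()
genuine = prove(ENROLLED_KEYS["dr-lee"], challenge)
forged = prove(secrets.token_bytes(32), challenge)
print(verify("dr-lee", challenge, genuine))
print(verify("dr-lee", challenge, forged))
```

A cloned voice or face does nothing to help an impersonator here, because the check depends on possession of a key rather than on how the participant looks or sounds. Issuing a fresh challenge for each interaction is also one simple way to approach the continuous validation described above.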

Why Healthcare Raises the Stakes

Nowhere is this shift more critical than in healthcare. Here, AI-driven decisions are not about convenience or efficiency. They are about diagnosis, treatment, and care. Lives depend on the integrity of information and the trustworthiness of those interacting with it. At the same time, healthcare data is among the most exposed. Breaches have made it easier than ever to reconstruct identities, and simulating a real patient or provider now costs a small fraction of what it once did. This creates a dangerous combination: high-stakes decisions built on increasingly unreliable signals of trust.

Healthcare also still runs on fragmented communication, including pagers, phone calls, and disconnected systems. As Vince notes, one of the clearest indicators of inefficiency is the number of phone calls required to get answers. “If you can count and reduce the number of phone calls, you’re truly making an impact in a healthcare setting.” He points to the reality that even today, critical workflows rely on outdated patterns. A page goes out. It triggers a call. That call leads to another call, because the context isn’t there, the identity isn’t verified, and the information isn’t accessible in a trusted way.

“What do you have to do when you receive a page? You pick up a phone… you need more information. That call leads to another phone call for additional context and verification.”

Until identity, context, and data can move securely and seamlessly between parties, healthcare will continue to operate in fragments rather than as a coordinated, intelligent system. AI has the potential to change that. But only if trust is built into the system from the start.

Regulated Intelligence

The instinctive response to AI has been to centralize data. Aggregate everything into a single system, then run intelligence over it. But this approach creates new risks: massive honeypots of sensitive data, increased attack surfaces, and reliance on third parties to manage what should never leave its source. The alternative is a different model: keep data where it lives, and move intelligence to it. This is the foundation of regulated AI, also known as federated AI.

Instead of pulling data into centralized systems, intelligence operates locally. Only the necessary outputs, such as context, insights, and decisions, are shared. This reduces exposure, preserves privacy, and enables scale without compromising control. It also aligns with how trust should work in an AI-driven world.
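As a rough sketch of what "moving intelligence to the data" can mean in practice, the example below computes a cross-site average without any site sharing its raw records. It is a deliberately simple illustration of the federated pattern, not a description of how Axonis implements it. The site names, readings, and function names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LocalSummary:
    """The only thing a site shares: aggregate figures, never patient rows."""
    site: str
    n: int
    total: float


def summarize_locally(site: str, readings: list[float]) -> LocalSummary:
    """Runs inside the site's own environment, next to the raw data."""
    return LocalSummary(site=site, n=len(readings), total=sum(readings))


def combine(summaries: list[LocalSummary]) -> float:
    """Runs at the coordinator, which sees summaries only."""
    n = sum(s.n for s in summaries)
    if n == 0:
        raise ValueError("no data reported by any site")
    return sum(s.total for s in summaries) / n


# Hypothetical per-site readings; in this model they never leave each site.
site_a = summarize_locally("hospital-a", [6.1, 7.4, 5.9])
site_b = summarize_locally("clinic-b", [6.8, 7.0])
print(round(combine([site_a, site_b]), 2))
```

The coordinator ends up with the same average it would have computed from a centralized copy, but it never holds the underlying records. Aggregates from very small sites can still reveal information about individuals, so a real deployment would likely add safeguards such as minimum group sizes.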

Identity-Bound Infrastructure

Federation alone is not enough. It must be paired with a new layer of infrastructure built around identity. And not just human identity.

In the agentic era, systems must verify:

- Humans making decisions

- AI agents executing tasks

- Devices generating data

- Robots interacting with environments

Every participant must have a verifiable, cryptographic identity. Every interaction must be authenticated continuously, not just at login. On top of that, decisions must be governed by context and policy. Who is allowed to access what data? Under what conditions? For what purpose? And critically, a human must remain accountable.
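The questions above (who, what data, for what purpose, and who is accountable) can be expressed as an explicit policy check. The sketch below is one hypothetical way to encode them. The `Request` fields, the `POLICY` table, and the rule that non-human actors need a named accountable human are illustrative assumptions, not an existing standard or product API. The `identity_verified` flag is assumed to come from a separate cryptographic check.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    actor_id: str                      # identity of the participant making the request
    actor_kind: str                    # "human", "agent", "device", or "robot"
    resource: str                      # e.g. "medication-orders"
    purpose: str                       # e.g. "treatment"
    accountable_human: Optional[str]   # who answers for this action


# Hypothetical policy table: (actor_kind, resource) -> permitted purposes.
POLICY = {
    ("human", "medication-orders"): {"treatment"},
    ("agent", "medication-orders"): {"treatment"},
    ("device", "vitals"): {"monitoring"},
}


def authorize(req: Request, identity_verified: bool) -> tuple[bool, str]:
    """Return (allowed, reason) so that every decision can be logged and audited."""
    if not identity_verified:
        return False, "identity not verified"
    allowed_purposes = POLICY.get((req.actor_kind, req.resource), set())
    if req.purpose not in allowed_purposes:
        return False, "purpose not permitted for this actor and resource"
    if req.actor_kind != "human" and not req.accountable_human:
        return False, "no accountable human on record"
    return True, "ok"


# Usage: the same agent request is denied or allowed depending on accountability.
unowned = Request("agent-7", "agent", "medication-orders", "treatment", None)
owned = Request("agent-7", "agent", "medication-orders", "treatment", "dr-lee")
print(authorize(unowned, identity_verified=True))
print(authorize(owned, identity_verified=True))
```

Returning a reason alongside each decision is a small design choice that supports auditability: a denied request leaves a record of which rule stopped it.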

Standards as the Accelerator

If AI is to scale safely, it will require standards. Full stop. Historically, standards have been seen as slow-moving and bureaucratic. But in reality, they are what enable speed at scale. Protocols like TCP/IP are what made the internet possible. AI now needs similar foundations:

- Standards for identity proofing

- Standards for agent behavior

- Standards for decision intelligence and auditability

- Standards for ethical use

Without them, every system becomes a silo and trust becomes impossible to verify.

Building the Alliance

AI will transform healthcare. It will reduce friction, improve outcomes, and unlock entirely new capabilities, from robotics in care environments to real-time, contextual decision support. But no single company can drive the standards needed to ensure its success. What’s required is an industry-wide effort: an alliance of technology providers, healthcare organizations, regulators, and academic institutions working together to define and implement the infrastructure for trusted AI.

Axonis is helping lead this shift, collaborating with experts like Vince Albanese and EKKO to bring federated AI, identity-bound infrastructure, and decision intelligence into regulated environments. Together, we are helping establish the foundation for how AI can be safely deployed, governed, and trusted where it matters most.

For more information on ID Proof, connect with the folks from EKKO. To unlock AI safely in regulated markets or learn more about Decision Intelligence, talk with Axonis.